How to become a MediaWiki hacker/2011 Workshop

This page is obsolete. It is being retained for archival purposes. It may document extensions or features that are obsolete and/or no longer supported. Do not rely on the information here being up-to-date.

A workshop to teach developers how to hack MediaWiki.

Allot three to four hours, including a 20-min biobreak in the middle. Works all right with 3-4 teachers and about 20 learners.

This page is currently a draft.


Originally taught to about 24 participants on Aug 2, 2011, Hecht House, Haifa, Israel (part of Developers' Days).

Overview

In this workshop, we will go over the basic coding toolchain and our code intake/review/merge/deploy/release workflow. We will cover the topics in "HOWTO Become A MediaWiki Hacker".

We will explain the different ways to change MediaWiki's behavior for various purposes. We start with easy things and work our way up to more time-consuming or pioneering work.

- User preferences, including Gadgets (JavaScript-based site customizations that require the Gadgets extension to be installed; especially good for eye candy)

- Configuration options

- Skins

- Extensions (including Special pages, parser hooks, hooks in general, and parser functions & parser tags)

- Modifying MediaWiki core

Then we ask each person to choose a small task and spend some time, maybe 20-45 minutes, working on it. Experienced people will circulate, teams pair up, etc.

What to have prepared ahead of time?

Students should:

- If using Windows, consider installing an Ubuntu Linux virtual machine by downloading the latest Ubuntu ISO file and VirtualBox

- Have a text editor such as vim, TextEdit, or Notepad++. Windows' default Notepad won't work: it causes byte order mark (BOM) errors.

- Install an IRC client

- Have an account on https://phabricator.wikimedia.org

- Install a Git client

If you have time, install MediaWiki: download the tarball and use the installer. If adventurous, try downloading and installing the latest development version from Git, but don't worry if you don't get that far.

Teacher: go through the event planning checklist. For example, tell Freenode the venue's IP so IRC doesn't get blocked, and put important URLs in a visible place (whiteboard, paper signs).

Poll at start of workshop

- Ask learners: what's your reason/interest/focus?

- Then, as you teach the workshop, point out areas of interest to those students in MediaWiki or Wikimedia. Example: tell Java people about Extension:CirrusSearch. When we talk about where the source code lives, tell RSS/API people where that code lives.

Syllabus

Intro resources

Help and discussion about MediaWiki development: #mediawiki on IRC.

The most useful HOWTO for new developers is How to become a MediaWiki hacker and the most useful overview is Manual:MediaWiki architecture.

An example of a bug: phab:T30296. Let's go through the bug comments to see the narrative of how a bug gets reported and fixed.

You can fix bugs! Our pools of bugs for beginners (but without mentors):

Or choose a software project with mentors instead.

How you give us code and how we review it and deploy it on Wikimedia servers

You write it: To contribute code as a new developer, you download the source code from our code repository, make a change, then make a diff and upload it to Gerrit.

- Format: unified diff.
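For learners who have never seen a unified diff, here is a minimal sketch of what the format looks like. In practice your Git client produces the diff for you; Python's standard `difflib` is used here only to show the format, and the file name and file contents are made up for illustration.

```python
import difflib

# Hypothetical "before" and "after" contents of a source file.
old = ["<?php\n", "echo 'Hello wiki';\n"]
new = ["<?php\n", "echo 'Hello, wiki!';\n"]

# unified_diff yields header lines, a hunk marker (@@ ... @@),
# then context lines (" "), removed lines ("-") and added lines ("+").
patch = "".join(
    difflib.unified_diff(old, new, fromfile="a/hello.php", tofile="b/hello.php")
)
print(patch)
```

Lines starting with "-" are removed, lines starting with "+" are added, and unmarked lines are context that helps the reviewer (and the patch tool) locate the change.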

We review it: Then experienced developers review your code and either tell you how to improve it, or accept it into our code repository. Then there is an additional, more thorough code review step; we run automated tests to see if there's a problem, and someone visually inspects your change, and marks it as "ok" or tells you how to fix it.

We deploy and release it: Every few months, we take all the new code that's been marked "ok" and deploy it onto Wikimedia servers. Then we fix any bugs that arise and release a new version of MediaWiki (the "tarball").

Once you are a little more experienced, you can start running automated tests on your own work before you commit it, to find problems before you submit the patch.

- Q: Is there a place in Git where you can download a good working copy (without fixmes etc.)?

- A: No. But we do have Git branches for stable releases (e.g. "REL1_37") and we have the downloadable tarball releases.

- Recommendation: Check out the latest branch that is being prepared for a release.

- Download from Git

- Tip: If you submit patches, they should be made based on the latest "main" or "master" branch to avoid merge conflicts.

- Do not submit patches based on a stable release branch or a stable tarball download!

- Time for a 20-30 min break.

User preferences

The easiest way to play around with MediaWiki is to change user preferences. And if you're running your own MediaWiki site, you can change/customize default values, like the default skin, etc. Examples: enhanced recent changes, gadgets!

Gadgets are a nice way for new developers (who know JavaScript) to start customizing their wiki experience, and eventually get involved in MediaWiki development. There's a detailed intro at Gadget kitchen and a detailed training document and video at Gadget kitchen/January 2012 training. And you can use jQuery (as of MediaWiki 1.17).

- Q: How is the JavaScript from Gadgets executed?

- A: The Gadgets extension is loaded on every page and checks whether the user has enabled the preference for that gadget. If so, it loads the gadget's JavaScript through a <script> tag.

The gadgets are only editable/publishable by wiki administrators and are stored as wiki pages on [[MediaWiki:Gadget-{gadgetname}.js]].

Inside the script a gadget maker can do things like "if ( wgPageName == 'Amsterdam' )". This way, the guarded code only runs on the page with that name.

- To "create" a new Gadget, the wiki administrator adds a list item on MediaWiki:Gadgets-definition. More about this is in Gadget kitchen.

- Since MediaWiki 1.19 gadgets are always loaded through ResourceLoader, which means each module executes in a local (new) scope by default.
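To make the definition list less abstract, here is a rough sketch of how one line of MediaWiki:Gadgets-definition could be split into a gadget name, options, and script pages. The line syntax assumed here (`* name[options]|page1|page2`) and the gadget name `mygadget` are assumptions for illustration; Gadget kitchen has the authoritative format, and this is not the extension's real parser.

```python
import re

# Assumed shape of a definition line: "* name[opt1|opt2]|page1|page2"
LINE_RE = re.compile(r"^\*+\s*([A-Za-z][\w.-]*)\s*(?:\[(.*?)\])?\s*\|(.+)$")

def parse_gadget_line(line):
    """Return a dict describing the gadget, or None if the line is not a definition."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    name, options, pages = match.groups()
    return {
        "name": name,
        "options": [o.strip() for o in options.split("|")] if options else [],
        # Each listed page lives in the MediaWiki: namespace with a "Gadget-" prefix.
        "pages": ["MediaWiki:Gadget-" + p.strip() for p in pages.split("|") if p.strip()],
    }

gadget = parse_gadget_line("* mygadget[ResourceLoader|default]|mygadget.js|mygadget.css")
print(gadget)
```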

Configuration options

There are also things you can do to configure MediaWiki, as the site's administrator, to customize how it runs. See Manual:Configuration settings.

Skins

"Skins" are what we call themes. The best-designed standard skin currently is "Vector" (now the default), and you can modify it or write your own. For more information, see

Extensions

Extensions let you customize how MediaWiki looks and works. There are a bunch of kinds of extensions.

- More documentation is at Manual:Extensions and Manual:Developing extensions.

In Gerrit, there is a mediawiki/extensions directory, which is where extensions live. If you write one and commit it to the extensions directory, it will be translated by the translatewiki.net translators. Most of these extensions aren't suitable for the Wikimedia sites, because they're specialized, unneeded (e.g. proofreading tools on Wikisource), or insecure for sites like Wikipedia (for example, a "Who is online" extension is more of a social feature, not something for an encyclopedia). On any MediaWiki wiki, you can see the list of installed extensions by looking at your wiki's Special:Version page.

You can consider an extension fairly safe & stable if it's used on a Wikimedia production wiki (indicated by a box at the bottom of the extension's page -- example).

- Please look at the example extensions to understand how extensions should work in practice. In Git:

/mediawiki/extensions/examples/. Web-browsable version at https://phabricator.wikimedia.org/diffusion/EXAM/

You may need to learn Parser functions to do some of the things you want with extensions.

Special pages

It is fairly easy to write a small, simple "Special page". See Manual:Special pages for more details, including a template you can work from. A special page is just a PHP file - you can output whatever HTML you like to do what you want to do with it. There is example code in the "examples" Git subdirectory.

Magic words

A magic word lets you add your own wikitext tags to extend wikitext. Extensions can introduce both magic words and tags.

There are three kinds of magic words: parser functions, variables, and behaviour switches (boolean triggers).

- Parser functions

Look like {{#something: argument}}

There are core parser functions included in core MediaWiki -- see Manual:Parser functions. Extensions can introduce their own parser functions, with the most popular one being Extension:ParserFunctions.

- Variables

Look like {{SITENAME}} (which outputs the wiki's name, e.g. "MediaWiki"), etc.

- Behaviour switches (like __TOC__, which places the table of contents at that position; extensions can add their own behaviour switches, like __NOINDEX__).

Parser tags

Individual projects will often find it useful to extend the built-in wiki markup with additional capabilities, whether simple string processing, or full-blown information retrieval. Tag Extensions allow users to create new custom tags that do just that. For more, see Manual:Tag extensions.

New tags can be introduced by an extension. For example,

<rss url="something" posts="20" />

could be a parser tag created by an extension to show recent posts of a blog inside a wiki page.

Tags cannot be nested, because the contents are handled by the tag function and not by the wikitext parser.
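The idea of "the tag function handles the contents" can be sketched with a toy renderer. This is not MediaWiki's parser: the regular expressions, the `handlers` registry, and `rss_handler` are all invented for illustration. The point is only that the handler receives the raw content and the attributes and returns whatever should appear in the page.

```python
import re

# Matches <name attrs>content</name> or a self-closing <name attrs />.
TAG_RE = re.compile(r'<(\w+)((?:\s+\w+="[^"]*")*)\s*(?:/>|>(.*?)</\1>)', re.S)
ATTR_RE = re.compile(r'(\w+)="([^"]*)"')

handlers = {}  # tag name -> function(content, attrs) returning replacement text

def rss_handler(content, attrs):
    # A real extension would fetch the feed; this stub only reports its inputs.
    return "[{} latest posts from {}]".format(attrs.get("posts", "10"), attrs["url"])

handlers["rss"] = rss_handler

def render(wikitext):
    def replace(match):
        name, raw_attrs, content = match.groups()
        handler = handlers.get(name)
        if handler is None:
            return match.group(0)  # unknown tag: leave the text untouched
        # The content is passed through raw; nothing inside it is parsed further.
        return handler(content, dict(ATTR_RE.findall(raw_attrs)))
    return TAG_RE.sub(replace, wikitext)

print(render('News: <rss url="something" posts="20" />'))
```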

Hooks

A hook lets an extension register a function that MediaWiki calls whenever a specific event happens. A hook handler can do things like notifying the IRC channel when a specific page is changed. More info: Manual:Hooks.
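For people new to the pattern, a hook system boils down to a registry of functions keyed by event name. The sketch below is a generic illustration, not MediaWiki's hook API: the event name `PageSaved`, the `register` and `run_hooks` helpers, and the list standing in for an IRC channel are all hypothetical.

```python
from collections import defaultdict

hooks = defaultdict(list)  # event name -> list of handler functions

def register(event, handler):
    hooks[event].append(handler)

def run_hooks(event, *args):
    """Call every handler for the event; a handler returning False stops the chain."""
    for handler in hooks[event]:
        if handler(*args) is False:
            return False
    return True

notifications = []  # stands in for messages sent to an IRC channel

def notify_irc(title, user):
    if title == "Main Page":
        notifications.append("{} edited {}".format(user, title))
    return True

register("PageSaved", notify_irc)

# The core code would fire the event at the right moment:
run_hooks("PageSaved", "Main Page", "Alice")
run_hooks("PageSaved", "Sandbox", "Bob")
print(notifications)
```

The core code does not need to know which extensions are listening; it only fires the event.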

MediaWiki core overview

If none of the approaches above do what you want, you may have to actually alter MediaWiki core, which lives in the mediawiki/core Git repository (formerly trunk/phase3 in Subversion).

Every request comes in through index.php, which dispatches to the MediaWiki class. That class determines the request's action parameter; most of the logic is then handled in the Article class, which dispatches the various aspects of the request.

Check out the SpecialPage class, which affects all special pages. You can thus modify Preferences, Contributions, Version, etc. It is an easy place to jump into the code, because it's self-contained.

Here is the structure of the database schema.

- This is a good time to dive into hands-on bug triage or testing for people who don't want to contribute just yet, and Annoying little bugs work for people who want to dive in and code. One way to structure the workshop: this hands-on work would last for the rest of the workshop, and if one of the developers leading it felt the need to burst into a few minutes of lecturing (because a few people were having the same problem), that would be fine.

During the coding/workshop, if anyone is ready to install or to suggest a patch, be ready to explain the directory structure.

Important Git directories:

- https://gerrit.wikimedia.org/g/mediawiki/core/ MediaWiki core

- https://gerrit.wikimedia.org/g/mediawiki/extensions/ MediaWiki extensions

File structure overview of MediaWiki core ( https://phabricator.wikimedia.org/diffusion/MW/ ):

- index.php

This is a small file that does some initialization and then goes off to WebStart. All article views, edits, actions, special pages -- all of it goes through this file.

- api.php

- ..

- /includes: the "PHP includes directory"

Contains default settings, global functions, and all classes (such as the Special pages, wiki page actions (read, edit, etc.), ResourceLoader, API modules, and lots more).

- ..

- /skins/

- ..

Example case: a reading list for a user to track what they've read or not in a given category and to make an educational game which asks questions about the pages that have been read.

- needs a hook to do something in the DB when a user reads something

- needs a special page to show the user what they've read

You could do this as a gadget, but you wouldn't have access to the database, so it would be simpler to do it as an extension written in PHP.

Permissions and vandalism

In MediaWiki everybody with the 'edit' right can make an edit. Users can use the 'undo' button from the history page to undo any previous edit.

There is little to no vandalism detection in core, but there are many good extensions to help with this. For example Wikipedia uses CheckUser, Nuke, AbuseFilter, AntiBot, AntiSpoof, ConfirmEdit, GlobalBlocking, SimpleAntiSpam, SpamBlacklist, Title Blacklist, TorBlock, etc.

Side topic: using the MediaWiki action API

Bots

Bots are not in the MediaWiki source tree: they're programs that have (for example) Wikipedia accounts and use the action API to (for example) edit the text of pages or look at the recent changes. They are often written in Python (using the pywikibot framework) or in any programming language that can do HTTP requests (e.g., in PHP with cURL to the API).

See Manual:Pywikibot and API; there is a detailed tutorial at API/Tutorial.
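As a taste of what a bot does under the hood, here is a small sketch that builds an action API request URL for the recent changes list. It only constructs the URL and makes no network request. The endpoint shown (mediawiki.org's `api.php`) and the chosen parameter values are assumptions for illustration; check the API documentation for the options your wiki supports.

```python
from urllib.parse import urlencode

# Assumed endpoint; every wiki exposes its own api.php.
API_ENDPOINT = "https://www.mediawiki.org/w/api.php"

def recent_changes_url(limit=5):
    """Build a query URL asking for the most recent changes as JSON."""
    params = {
        "action": "query",
        "list": "recentchanges",
        "rclimit": limit,
        "rcprop": "title|user|timestamp",
        "format": "json",
    }
    return API_ENDPOINT + "?" + urlencode(params)

url = recent_changes_url(3)
print(url)
```

Any language that can send an HTTP GET to such a URL and parse JSON can serve as a bot framework; pywikibot wraps this kind of request for you.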

Plugins to other systems

If you use the MediaWiki API you can create plugins that integrate Wikipedia or other MediaWiki installations into other apps or tools.

- Now the teacher has a choice -- code tour or individual hacking.

Start hacking!

Code Tour

Now the teacher goes over the general architecture of MediaWiki on a code level, covering important tables and classes.

- Note to teacher: you may want to show the students the execution workflow of a web request within MediaWiki.

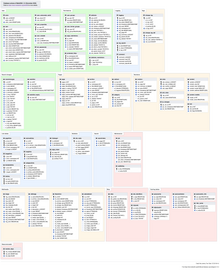

There is a lot of stuff in this diagram of MediaWiki's database tables! But you don't need to know most of it. We'll go over the important tables.

Tables

The Page, Revision, and User tables are the most important ones.

- 'page' table

The page table is the centerpiece of lots of interactions.

page_id - every page has a numerical ID

- exposed in the interface sometimes.

- auto-incremented: every page gets one as it is created.

page_namespace - Counterintuitively, the namespace of a page is a number, not a text field. (Surprising. You'd expect the text to be somewhere.)

- The text name is a configuration matter: pages in the User namespace have a "2" in that field (see includes/Defines.php).

- A separate file says that 2 means "User". This is for localisation.

- It also means you can rename namespaces. If you add a new namespace, you have to give it a number.

- For core, it should be over 15.

- For extensions adding namespaces: use something higher than 100.

- 0-99 are "reserved" as core namespaces.

- Different extensions reserve different namespaces. Semantic MediaWiki, for example, uses 100-199.

- Negative namespaces are special; don't try to use them.

- Wikimedia-specific namespaces are not in the main code

- see $wgExtraNamespaces — you can create your own.

- Many languages have "Portal", but not all, and it's not in the core.

- Example: on Lithuanian Wikipedia, articles that are lists are put in a different namespace.

- How high can you go? The field is 32 bits wide, so about 2 billion (signed) or 4 billion (unsigned) namespaces are possible for a single wiki.

- Every namespace should have an associated talk namespace.

- Subjects are even numbers and Talk namespaces are odd. If you break this convention, MediaWiki breaks!

page_title - the title itself, stored without a namespace prefix.

- Example: for User:Sumanah the database stores: namespace=2, title=Sumanah

page_restrictions

page_counter

page_is_redirect - Is this page a redirect?

page_is_new - is it new?

page_random

page_touched

page_latest - Relates to the revision table! Specifically, this points to a row in that table.

page_len
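The namespace rules above (numbers instead of names, even subject namespaces paired with odd talk namespaces, titles stored without the prefix) can be sketched in a few lines. The mapping below is a hand-picked subset of the core namespace numbers, and `split_title` and `talk_namespace` are hypothetical helpers, not MediaWiki functions. Real MediaWiki also handles localisation, aliases, and title normalisation, all omitted here.

```python
# Assumed subset of core namespace numbers: subjects are even, talk pages odd.
NAMESPACES = {0: "", 1: "Talk", 2: "User", 3: "User talk", 14: "Category", 15: "Category talk"}
NAME_TO_ID = {name: ns_id for ns_id, name in NAMESPACES.items() if name}

def is_talk(ns_id):
    return ns_id >= 0 and ns_id % 2 == 1

def talk_namespace(ns_id):
    """Return the talk namespace that belongs to a subject namespace."""
    if ns_id < 0:
        raise ValueError("special (negative) namespaces have no talk namespace")
    return ns_id if is_talk(ns_id) else ns_id + 1

def split_title(full_title):
    """Split 'User:Sumanah' into (page_namespace, page_title) as stored in the page table."""
    prefix, sep, rest = full_title.partition(":")
    if sep and prefix in NAME_TO_ID:
        return NAME_TO_ID[prefix], rest
    return 0, full_title  # no known prefix: main namespace

print(split_title("User:Sumanah"))
print(talk_namespace(2))
```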

- 'revision' table

The revision table records metadata for every revision, including the one that created the page.

rev_id - a unique ID for a revision

- sometimes visible in the interface as "oldid"

rev_page

rev_text_id - see the text table for the text of the edit

- The text table holds an ID, a blob of text, and some flags. In theory, the blob is just text. In practice, it may be gzipped (in which case the gzip flag is set), or stored externally, etc.

rev_comment - edit summary

rev_user - user who made the edit

rev_user_text

rev_timestamp - timestamp

rev_minor_edit

rev_deleted

rev_len

rev_parent_id
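The "blob plus flags" idea from the text table can be sketched as follows. This is a simplified model under assumptions: the column names `old_text` and `old_flags` and the flag names are recalled from the MediaWiki schema of that era rather than stated on this page, compression is assumed to be raw deflate, and externally stored text is left out.

```python
import zlib

def store_text(text, compress=False):
    """Return a dict shaped like a row of the text table (simplified)."""
    data = text.encode("utf-8")
    flags = ["utf-8"]
    if compress:
        deflater = zlib.compressobj(wbits=-15)  # raw deflate stream, no header
        data = deflater.compress(data) + deflater.flush()
        flags.append("gzip")
    return {"old_text": data, "old_flags": ",".join(flags)}

def load_text(row):
    """Undo whatever the flags say was done to the blob."""
    flags = row["old_flags"].split(",")
    data = row["old_text"]
    if "external" in flags:
        raise NotImplementedError("externally stored text is out of scope for this sketch")
    if "gzip" in flags:
        data = zlib.decompress(data, -15)
    return data.decode("utf-8")

row = store_text("Hello, wiki! " * 20, compress=True)
print(row["old_flags"], len(row["old_text"]))
print(load_text(row)[:12])
```

The reader never needs to know how a revision was stored; the flags carry that information alongside the blob.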

- 'user' table

user_id - autoincrement ID

user_name - user's name

The rest of the stuff in a user record is all sorts of random metadata about a user.

- 'recentchanges' table

recentchanges is a separate summary table (although the data can be inferred from other tables) for performance reasons.
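To see how the page and revision tables fit together, here is a toy version using an in-memory SQLite database. Only a handful of the columns listed above are included, and the `save_revision` helper is hypothetical; the real schema has many more fields and indexes. The sketch shows page_latest pointing at the newest row in revision.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.executescript("""
CREATE TABLE page (
    page_id        INTEGER PRIMARY KEY AUTOINCREMENT,
    page_namespace INTEGER NOT NULL,
    page_title     TEXT NOT NULL,
    page_latest    INTEGER NOT NULL DEFAULT 0,
    UNIQUE (page_namespace, page_title)
);
CREATE TABLE revision (
    rev_id        INTEGER PRIMARY KEY AUTOINCREMENT,
    rev_page      INTEGER NOT NULL,
    rev_comment   TEXT,
    rev_user_text TEXT
);
""")

def save_revision(namespace, title, user, comment):
    """Create the page row if needed, add a revision, and point page_latest at it."""
    row = db.execute(
        "SELECT page_id FROM page WHERE page_namespace = ? AND page_title = ?",
        (namespace, title),
    ).fetchone()
    if row is None:
        page_id = db.execute(
            "INSERT INTO page (page_namespace, page_title) VALUES (?, ?)",
            (namespace, title),
        ).lastrowid
    else:
        page_id = row[0]
    rev_id = db.execute(
        "INSERT INTO revision (rev_page, rev_comment, rev_user_text) VALUES (?, ?, ?)",
        (page_id, comment, user),
    ).lastrowid
    db.execute("UPDATE page SET page_latest = ? WHERE page_id = ?", (rev_id, page_id))
    return rev_id

save_revision(2, "Sumanah", "Sumanah", "created page")
save_revision(2, "Sumanah", "Sumanah", "fixed typo")

# Joining on page_latest finds the current revision of each page.
latest = db.execute(
    "SELECT page_title, rev_id, rev_comment FROM page "
    "JOIN revision ON rev_id = page_latest WHERE page_namespace = 2"
).fetchall()
print(latest)
```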

Classes

We'll show you the two most important classes, Title and WikiPage. For those and all the others, the best reference is https://doc.wikimedia.org/ which is always up-to-date.

- Doxygen documentation, similar to PHPdoc, but can be used in many languages.

- Autogenerated documentation, comments start with /** instead of just /*

- About a wiki page (Title class, WikiPage class)

Every page is uniquely identified in two ways:

- "page id", stored in page.page_id

or:

- "namespace & title" pair, which together are always unique: there can be several pages with the same title (Project:Foo, User:Foo, Category:Foo), but there can be only one with a given namespace + title pair.

- (A page like "Brion Vibber" could be a User page, Article, Category, or anything; the page title itself isn't enough to be unique within a wiki. You need the namespace to know exactly what the page is.)

- Title class

Inside MediaWiki, pages are identified through instances of the "Title" class, not by passing page_ids or namespace/title combos. So if an extension hooks into core functionality it will get the instance of the Title class for the current page (stored in the global $wgTitle) and can use methods like $mytitle->isRedirect() to see if a page is a redirect.

To create an instance of Title use any of the constructor methods such as Title::newFromId or Title::newFromText etc.

The Title class has several static utility functions to offer. The most used is makeTitleSafe. Always use safe methods when your input is coming from users, or if you aren't sure that it's sanitized. It may be OK to use non-safe methods when your input is coming directly from the database, though.

A Title-constructor may return null. This indicates that the title is invalid. Always check for nulls!

- Q: We see getters, but no setters. How do we set values?

- A: That's not possible; otherwise Title objects would be mutable and unreliable. Besides, they represent a title, not a modifiable page.
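The two habits described here (titles are immutable, and a constructor may hand back null) can be illustrated with a small stand-in class. This is not the PHP Title class: the `make_title_safe` method, its validation rules, and the set of rejected characters are simplified assumptions for illustration.

```python
from dataclasses import dataclass, FrozenInstanceError
from typing import Optional

# Simplified assumption: characters that may not appear in a page title.
ILLEGAL_CHARS = set("#<>[]|{}")

@dataclass(frozen=True)  # frozen: attributes cannot be changed after creation
class Title:
    namespace: int
    text: str

    @staticmethod
    def make_title_safe(namespace: int, text: str) -> "Optional[Title]":
        """Return a Title, or None when the input is not a valid title."""
        text = text.strip().replace("_", " ")
        if not text or any(ch in ILLEGAL_CHARS for ch in text):
            return None
        return Title(namespace, text)

title = Title.make_title_safe(2, "Sumanah")
if title is None:  # always check for None before using the result
    print("invalid title")
else:
    print(title.namespace, title.text)
```

Because the object is frozen, code that receives a title can rely on it not changing underneath it; changing a page goes through a different class.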

On that subject: to actually touch the database and modify an actual wiki page, we use the WikiPage class.

- WikiPage class

On a high level:

    $myTitle = Title::newFrom***( ... );
    $myArticle = new WikiPage( $myTitle );
    $myArticle->doEdit( ... );

Wrap-up

Time for Q&A!

- Q: There is an extension that calculates statistics -- how does it work?

- A: The view counters are actually not an extension but part of MediaWiki core; the functionality uses page.page_counter and there's a hit counter table. Whenever a user hits a page, a row is added to the hit counter table. That table is a temporary buffer whose rows are periodically folded into page.page_counter.

- Since large sites may not want to increment a database table field on every view, it is possible to disable this feature (see $wgDisableCounters). Wikipedia has disabled it.
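The buffering idea in this answer can be sketched independently of MediaWiki. The class below is a toy model with hypothetical names: a list stands in for the hit counter table, a `Counter` stands in for page.page_counter, and the flush threshold is arbitrary.

```python
from collections import Counter

class HitCounter:
    """Buffer page hits cheaply and fold them into the totals in batches."""

    def __init__(self, flush_every=3):
        self.page_counter = Counter()  # stands in for page.page_counter
        self.buffer = []               # stands in for the hit counter table
        self.flush_every = flush_every

    def hit(self, page_id):
        self.buffer.append(page_id)    # cheap append, no update of the totals yet
        if len(self.buffer) >= self.flush_every:
            self.flush()

    def flush(self):
        self.page_counter.update(self.buffer)  # one batched update
        self.buffer.clear()

counter = HitCounter(flush_every=3)
counter.hit(1)
counter.hit(1)
print(counter.page_counter[1])  # totals are stale until the buffer is flushed
counter.hit(2)
print(dict(counter.page_counter))
```

The trade-off is that totals lag behind reality between flushes, in exchange for fewer updates to the frequently read page rows.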

We're happy to continue mentoring you in person or online (chat, mailing lists). Everyone on the core team once started out as a beginner!

What participants want/like about this workshop

They find it informative, even if they already know some of the material. They find the database overview and code architecture behind Wikipedia interesting:

- what makes it run

- process of being able to interact with the code

- bugtracking system

- how to check out code

- edit it

- check it back in

- what happens after that